You probably already tried it.

Somewhere in the last 18 months, someone on your team — or maybe you — pointed a large language model at an OSS/BSS integration problem. Maybe it was a NETCONF adapter for a Nokia 7750 SR. Maybe it was an SNMP trap receiver for a Calix GPON platform. Maybe it was something simpler: a REST poller for your inventory system, or a syslog parser for a legacy Cisco device.

You gave it the MIB file. You described the target system. You asked it to generate the adapter. And it did.

The code looked right. The structure was clean. If you had shown it to a junior engineer without context, they would have said it looked solid.

And then you tried to run it in your environment.

What AI Actually Gets Right

Let me be precise about this, because the failure mode is not what most people think.

Large language models are genuinely good at generating syntactically correct code. They understand SNMP protocol structures. They can read a MIB file and produce reasonable Python. They know what a NETCONF session looks like and can scaffold a YANG model parser that compiles without errors. If you need a starting point — a template, a skeleton, a first draft — an LLM can give you one faster than any developer you have ever worked with.

That is a real capability. It is not nothing. For greenfield development in well-documented technology stacks with clear specifications, AI coding tools have genuinely changed what a small team can produce.

OSS/BSS integration in a production telecom environment is not that.

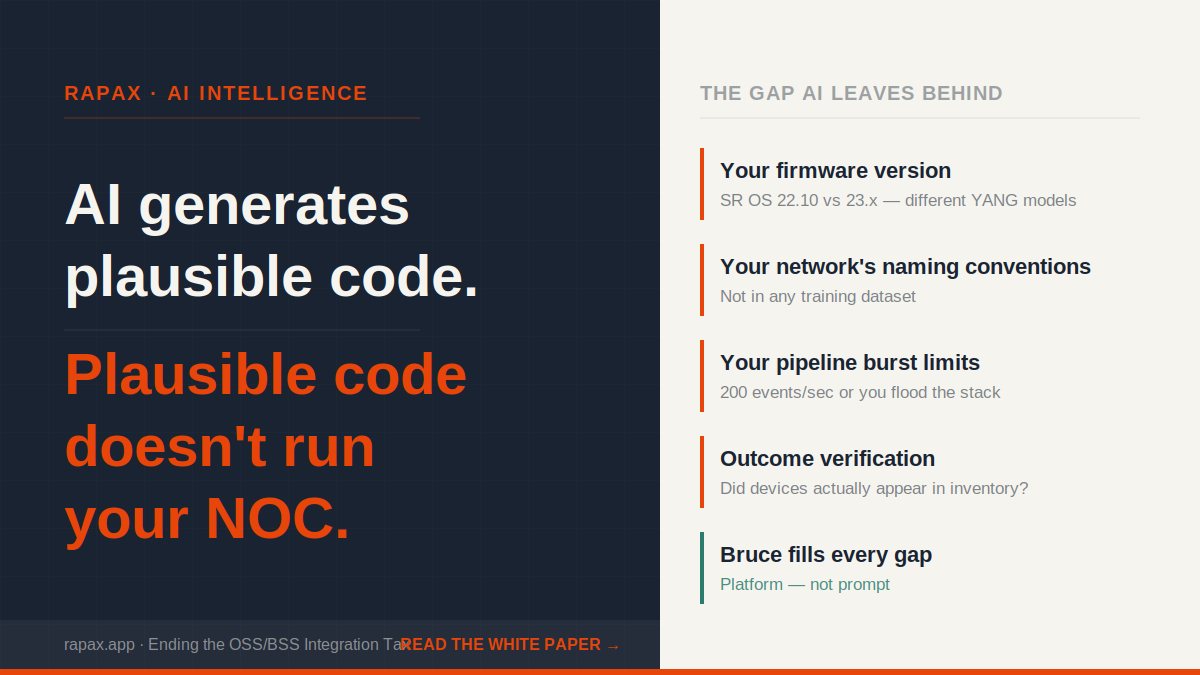

What AI Cannot Know

Here is the gap that every serious telecom engineering team discovers on first contact with AI-generated integration code: the model has no knowledge of your environment.

It does not know that your Nokia 7750 SR is running SR OS 22.10, not 23.x, and that the YANG model for ISIS adjacency changed between those releases. It does not know that your network uses a non-standard SNMP community string schema inherited from a 2019 acquisition. It does not know that your mediation layer drops packets above a certain burst rate, and that your SNMP trap receiver needs to be throttled to 200 events per second or it will overwhelm the pipeline. It does not know that your container registry requires a specific image tagging convention, or that your CI/CD pipeline has a mandatory linting gate that the generated code will not pass.

None of this is in any training dataset. It lives in your environment, in your configuration history, in the institutional memory of your network operations team. An LLM has no path to it.

The result is code that is structurally plausible but operationally ungrounded. It compiles. It might even run. But it does not run reliably in your specific environment, against your specific vendor versions, within your specific operational constraints — and finding out where it breaks requires exactly the kind of deep human expertise that you were hoping the AI would replace.

The Verification Problem

There is a second failure mode that is less obvious but equally damaging: AI-generated integration code has no way to verify its own outcomes.

A deployed integration is not complete when the container starts. It is complete when devices are appearing in inventory, when alerts are flowing through the correlation pipeline, when ticket creation is triggering on the right event types, and when the data arriving in your OSS matches what is happening on the network. Those outcomes require your systems to confirm them — and a language model has no connection to your systems.

This means that every AI-generated integration requires a human to do the verification work that the AI cannot do. Someone has to check whether the Nokia traps are actually arriving and being parsed correctly. Someone has to confirm that the Calix YANG model fields are mapping to the right inventory attributes. Someone has to watch the alert queue long enough to know whether the correlation logic is working. The AI gave you code. You still need an engineer.

In a large organization with dedicated integration engineering resources, this is a manageable handoff. For a Tier 2 or Tier 3 carrier with a two-person integration team and a backlog of six pending integrations, it is not. The AI accelerated the code generation phase and moved the bottleneck to verification — which is already where most of the time was being spent.

Why This Is Not a Criticism of AI

I want to be clear about something: this is not an argument that AI is overhyped or that language models do not belong in a telecom operations stack. I have spent 25 years in this industry and hold 12 patents in network operations and service assurance. We built Rapax as an AI-native platform. AI is central to what we do.

The argument is more specific than that. A large language model deployed as a standalone tool — no environmental context, no operational grounding, no verification loop, no integration history — will not solve your OSS/BSS integration problem. It will generate a plausible-looking starting point and hand the hard part back to you.

The distinction that matters is not AI versus no AI. It is AI-as-tool versus AI-as-platform.

What the Difference Looks Like in Practice

The Bruce capability inside Rapax uses AI as one component of more than ten foundational innovations. The others are what close the gaps that a standalone language model cannot close.

The requirements gathering engine pulls operational context from your previous integrations before it generates a line of code — so a second Nokia integration draws on everything learned in the first. The documentation ingestion pipeline processes your actual MIB files, your specific YANG models, your as-built configurations — not generic vendor documentation. The deployment pipeline commits to your GitHub repository, builds against your container registry, and deploys to your infrastructure. And the verification loop queries Rapax’s network inventory agent to confirm that expected devices have appeared and that alerts are actually flowing — before the integration is marked complete.

That is not AI generating code. That is a platform using AI, alongside environmental context, operational history, and closed-loop verification, to produce an outcome: an integration that runs reliably in your specific environment, verified against your actual network.

The Calix YANG model change that would have taken a standalone AI tool from “generate code” to “run correctly in production” in six weeks — and would have taken a traditional SI firm $180,000 to remediate — took Bruce six hours. Not because the AI is better. Because the platform around the AI is doing the work that AI alone cannot do.

The full architecture — what those eleven innovations are, and why the distinction between an AI tool and a platform that uses AI is the reason one of them works in a production NOC — is in the white paper below.

Download: Ending the OSS/BSS Integration Tax →

If any of this lands and you want to talk about what it means for your operation — 15 minutes at cal.com/shawn-ennis. No prep needed.

Leave a Reply